By Keith Tidman

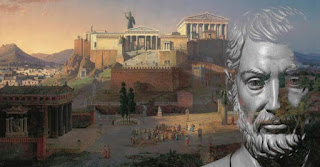

It was in his most-read work, The Republic, written about 380 BCE, that Plato recounted an exchange between Glaucon and Socrates, sometimes called the Allegory of the Cave. Socrates describes how in this cave, seated in a line, are prisoners who have been there since birth, entirely cut off from the outside world. Tightly restrained by chains such that they cannot move, their lived experience is limited to staring at the cave wall in front of them.

What they cannot know is that just behind where they sit is a parapet and fire, in front of which other people carry variously shaped objects, and it is these that cast the strange shadows. The shadows on the wall, and not the fire or the objects themselves, are the prisoners’ only visible reality — the only world they can know. Of the causes of the moving shadows, of the distinction between the abstract and the real, they can know nothing.

Plato asks us to consider what might happen if one of the prisoners is then unchained and forced reluctantly to leave the cave, into the glaring light of the sun. At first, he says, the brightness must obscure the freed prisoner’s vision, so that he can see only shadows and reflections, similar to being in the cave. However, after a while, his eyes would grow accustomed to the light, and eventually he would be able to see other people and objects themselves, not just their shadows. As the former prisoner adjusts, he begins to believe the outside world offers what he construes as a very different, even better reality than the shadows in the dusky cave.

But now suppose, Plato asks, that this prisoner decides to return to the cave to share his experience — to try to convince the prisoners to follow his lead to the sunlight and the ‘forms’ of the outside world. Would they willingly seize the chance? But no, quite the contrary, Plato warns. Far from seizing the opportunity to see more clearly, he thinks the other prisoners would defiantly resist, believing the outside world to be harmful and dangerous and not wanting to leave the security of their cave with the shadows they have become so familiar with, even so expert at interpreting.

The allegory of the cave is part of Plato’s larger theory of knowledge, his theory of ideals and forms. The cave and shadows are representative of how people usually live, often ensconced within the one reality they’re comfortable with and assume to be of greatest good. All the while, they are confronted by having to interpret, adjust to, and live in a wholly dissimilar world. The so-called truth that people meet up with is shaped by the contextual circumstances they happen to have been exposed to (their upbringing, education, and experiences, for example), which in turn sway their interpretations, judgments, beliefs, and norms, all of them often cherished. Change requires overcoming inertia and myopia, which proves arduous, given prevailing human nature.

People may wonder which is in fact the most authentic reality. And they may wonder how they might ultimately overcome trepidation, choose whether or not to turn their backs on their former reality, and understand and embrace the alternative truth, a process that perhaps happens again and again. The undertaking, or journey, from one state of consciousness to another entails conflict and requires parsing the differences in awareness of one truth over another, in order to be enlightened about the supposed higher levels of reality and to overcome what one might call the deception of perception: the unreal world of blurry appearances.

Some two and a half millennia after Plato crafted his allegory of the cave, popular culture has borrowed the core storyline, in both literature and movies. For example, the plots of both Fahrenheit 451, by Ray Bradbury, and The Country of the Blind, by H.G. Wells, concern eventual enlightened awareness, where key characters come to grips with the shallowness of the world with which they’re familiar every day.

Similarly, in the movie The Matrix, the lead character, Neo, is asked to make a difficult choice: to either take a blue pill and continue living his current existence of comfort but obscurity and ignorance, or take a red pill and learn the hard truth. He opts for the red pill, and in doing so becomes aware that the world he has been living in is merely a contrivance, a computer-generated simulation of reality intended to pacify people.

Or take the movie The Truman Show. In this, the lead character, Truman Burbank, lives a suburban family life as an insurance agent for some thirty years, before the illusion starts to crumble and he suspects his family is made up of actors and everything else is counterfeit. It even turns out that he is living on a set equipped with several thousand hidden cameras, producing a TV show for the entertainment of spectators worldwide. It is all a duplicitous manipulation of reality (a deception of perception, again) that creates a struggle for freedom. And in this movie, after increasingly questioning the unfathomable goings-on around him, Truman (like the prisoner who leaves Plato’s cave) manages to escape the TV set and to enter the real world.

Perhaps, then, what is most remarkable about the Allegory of the Cave is that there is nothing about it that anchors it exclusively to the ancient world in which it was first imagined. Instead, Plato’s cave is, if anything, even more pertinent in the technological world of today, split as it is between spectral appearances and physical reality. Being surrounded today with the illusory shadows of digital technology, with our attention guided by algorithm-steered, belief-reinforcing social media, strikes a warning note: today, more than ever, it is our responsibility to continually question our assumptions.