People often take delight in saying dolphins are smart. Yet, does even the smartest dolphin in the ocean understand quantum theory? No. Will it ever understand the theory, no matter how hard it tries? Of course not. We have no difficulty accepting that dolphins have cognitive limitations, fixed by their brains’ biology. We do not anticipate dolphins even asking the right questions, let alone answering them.

‘Any research that cannot be reduced to actual visual observation is excluded where the stars are concerned…. It is inconceivable that we should ever be able to study, by any means whatsoever, their chemical or mineralogical structure’. A premature declaration of the end of knowledge, made by the French philosopher Auguste Comte in 1835.

Some people then conclude that for the same reason (the built-in biological boundaries of our species’ brains) humans likewise have hard limits to knowledge. And that, therefore, although we acquired an understanding of quantum theory, which has eluded dolphins, we may not arrive at solutions to other riddles. Like the unification of quantum mechanics and the theory of relativity, both effective in their own dominions. Or a definitive understanding of how, and from where within the brain, consciousness arises, and what a complete description of consciousness might look like.

The thinking isn’t that such unification of branches of physics is impossible or that consciousness doesn’t exist, but that supposedly we’ll never be able to fully explain either one, for want of natural cognitive capacity. It’s argued that because of our allegedly ill-equipped brains, some things will forever remain a mystery to us. Just as dolphins will never understand calculus or infinity or the dolphin genome, human brains are likewise closed off from categories of intractable concepts.

Or at least, so it has been said.

Some who hold this view have adopted the self-describing moniker ‘mysterians’. They assert that, as a member of the animal kingdom, Homo sapiens is subject to the same kinds of insuperable cognitive walls as other animals. And that it is hubris, self-deception, and pretension to proclaim otherwise. There’s a needless resignation in this stance.

After all, the fact that early hominids did not yet understand the natural order of the universe does not mean that they were ill-equipped to eventually acquire such understanding, or that they were suffering from so-called ‘cognitive closure’. Early humans were not fixated solely on survival, subsistence, and reproduction, with existence defined by nothing more than a daily grind over the millennia in a struggle to hold onto the status quo.

Instead, we were endowed from the start with a remarkable evolutionary path that got us to where we are today, and to where we will be in the future. With dexterously intelligent minds that enable us to wonder, discover, model, and refine our understanding of the world around us. To ponder our species’ position within the cosmic order. To contemplate our meaning, purpose, and destiny. And to continue this evolutionary path for however long our biological selves ensure our survival as opposed to extinction at our own hand or by external factors.

How is it, then, that we even come to know things? There are sundry methods, including (but not limited to) these: Logical, which entails the laws (rules) of formal logic, as exemplified by the iconic syllogism, where the conclusion follows from the premises. Semantic, which entails the denotative and connotative definitions and context-based meanings of words. Systemic, which entails the use of symbols, words, and operations/functions related to the universally agreed-upon rules of mathematics. And empirical, which entails evidence, information, and observation that come to us through our senses and through tools such as those described below, which we use for analysis and to confirm, fine-tune, or discard hypotheses.

Sometimes the resulting understanding is truly paradigm-shifting; other times it’s progressive, incremental, and cumulative — contributed to by multiple people assembling elements from previous theories, not infrequently stretching over generations. Either way, belief follows — that is, until the cycle of reflection and reinvention begins again. Even as one theory is substituted for another, we remain buoyed by belief in the commonsensical fundamentals of attempting to understand the natural order of things. Theories and methodologies might both change; nonetheless, we stay faithful to the task, embracing the search for knowledge. Knowledge acquisition is thus fluid, persistently fed by new and better ideas that inform our models of reality.

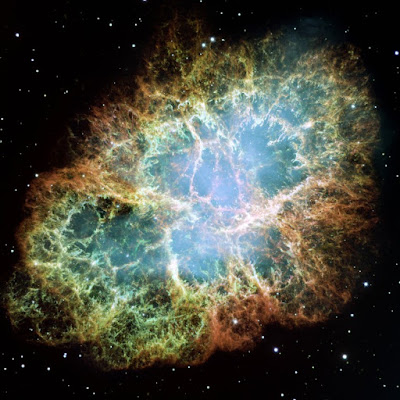

We are aided in this intellectual quest by five baskets of ‘implements’: Physical devices like quantum computers, space-based telescopes, DNA sequencers, and particle accelerators. Tools for smart simulation, like artificial intelligence, augmented reality, big data, and machine learning. Symbolic representations, like natural languages (spoken and written), imagery, and mathematical modeling. The multiplicative collaboration of human minds, functioning like a hive of powerful biological parallel processors. And, lastly, the nexus among these implements.

This nexus among implements continually expands, at a quickening pace; we are, after all, consummate crafters of tools and collaborators. We might fairly presume that the nexus will indeed lead to an understanding of the ‘brass ring’ of knowledge, human consciousness. The cause-and-effect dynamic is cyclic: theoretical knowledge driving empirical knowledge driving theoretical knowledge, and so on indefinitely, as part of the conjectural froth in which we ask and answer the tough questions. Such explanations of reality must take account, in balance, of both the natural world and the metaphysical world, in their respective multiplicity of forms.

My conclusion is that, uniquely, the human species has boundless cognitive access rather than bounded cognitive closure. Such that even the long-sought ‘theory of everything’ will actually be just another mile marker on our intellectual journey to the next theory of everything, and the next one — all transient placeholders, extending ad infinitum.

There will be no end to curiosity, questions, and reflection; there will be no end to the paradigm-shifting effects of imagination, creativity, rationalism, and what-ifs; and there will be no end to answers, as human knowledge incessantly accrues.